Most agencies start Reddit monitoring the same way. A keyword alert. A Slack notification. A shared Notion doc. It works for the first client. Then the second client comes on. Then the fifth.

By client ten, the setup that worked fine for one brand is producing hundreds of weekly alerts, routing them to the wrong team members, and mixing client A’s brand mentions with client B’s competitor intel. The monitoring system is running. The monitoring is not.

Reddit monitoring at agency scale requires a different architecture than Reddit monitoring for a single brand. The tools need to be different. The keyword strategy needs to be different. The reporting structure needs to be different. And the internal workflow that connects detection to decision to client deliverable needs to be built deliberately, not inherited from a single-client setup that outlived its usefulness.

This guide covers how to build a Reddit monitoring operation that holds at twenty clients, delivers consistent insight, and doesn’t require a full-time resource to keep from collapsing under its own alert volume.

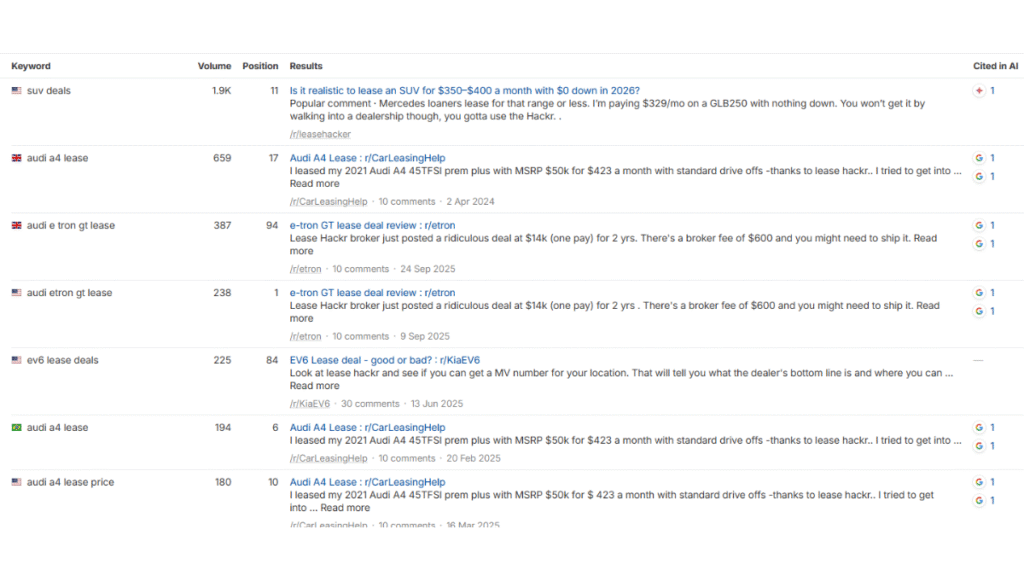

Reddit Belongs in Your AI Visibility Stack, Not Just Your Social Listening Stack

Before getting into the architecture, it helps to understand what you’re actually monitoring for. Social listening and AI visibility monitoring overlap at the tool level, but they are different programs with different KPIs.

Social listening tracks brand mentions, sentiment, and community conversation for reputation and customer insight. AI visibility monitoring extends this to track which Reddit threads are actively feeding AI retrieval systems: which threads rank in Google for queries your clients care about, which are being cited in ChatGPT or Perplexity responses, and where your clients have citation gaps against competitors.

Reddit accounts for more than 40% of Perplexity’s top-cited sources, more than 20% of Google AI Overview citations, and more than 10% of ChatGPT citations. When someone asks an AI engine about a brand or product category, the answer gets built from Reddit threads. Agencies that only run the social listening version of this program are delivering the easy half.

The architecture below covers both. The reporting structure makes the distinction explicit to clients.

Why Single-Client Reddit Monitoring Setups Break at Scale

The core failure mode is alert fatigue. A monitoring tool configured with fifteen brand-adjacent keywords across an unrestricted subreddit set will produce dozens of daily alerts for a single client. Multiply that by ten clients and the monitoring system becomes a noise machine that nobody has time to triage properly. Relevant signals get missed not because the tool failed to detect them, but because the analyst reviewing alerts gave up sorting after the first wave of false positives.

One concrete example: a monitoring setup with fifteen keywords produced 47 alerts in a single day, of which 5 were relevant. The analyst saved two to Notion and forgot about the rest. By the following week, the alert stream was being ignored entirely. The tool was running. The monitoring was not.

The second failure mode is cross-contamination. Most entry-level and mid-tier Reddit monitoring tools route all alerts to a single feed, a single Slack channel, or a single dashboard. In a single-brand setup, that’s fine. In a multi-client agency setup, the team is constantly asking “which client is this for?” before routing an alert to the right person. Brand voices get mixed. Competitive intelligence for one client gets confused with another.

The third failure mode is reporting. Reddit monitoring data does not naturally organize itself into the format a CMO or account manager needs to see. Raw alert feeds and unfiltered mention counts are not client deliverables. Agencies that skip building a structured layer between the tool and the report sometimes spend hours manually assembling outputs from data that was never organized for that purpose.

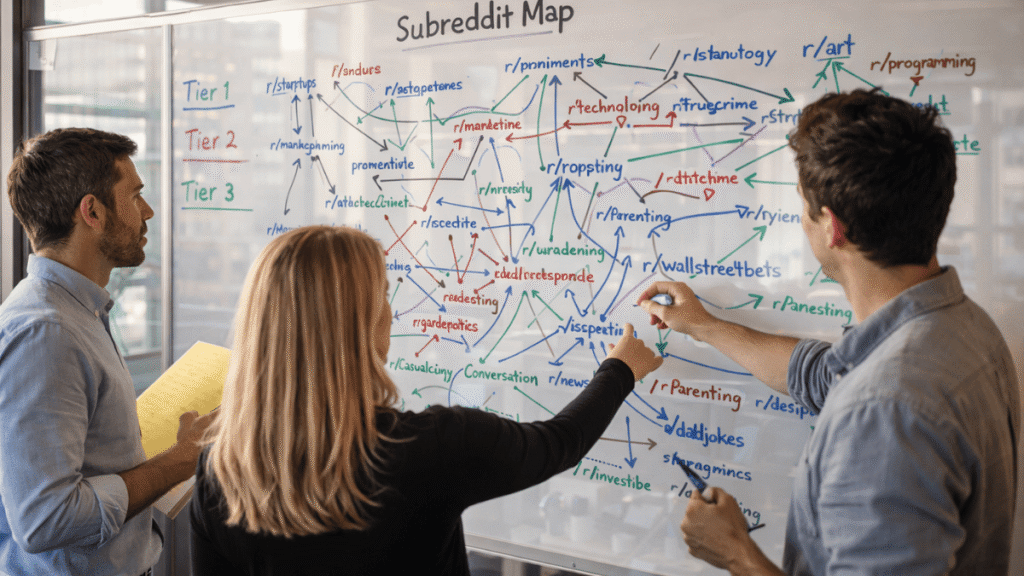

The Foundation: Subreddit Mapping Before Keyword Configuration

The most common agency mistake in Reddit monitoring setup is configuring keyword alerts before completing subreddit mapping. Keywords without subreddit targeting produce global Reddit mentions, which include enormous volumes of irrelevant context. A brand keyword appearing in a humor subreddit, a foreign-language community, or an unrelated topic thread is noise. Subreddit targeting reduces alert volume dramatically while increasing signal quality.

Subreddit mapping for each client should identify three tiers of communities before any keyword configuration begins.

Tier 1: Direct brand subreddits. Any community specifically dedicated to the client’s brand, product, or category. These warrant full monitoring of all posts and comments, not just keyword matches, because everything happening there is relevant by definition.

Tier 2: Category subreddits. Communities where the client’s product or service category is frequently discussed, even if the brand is not the primary topic. These warrant keyword monitoring for brand mentions, competitor mentions, and category intent phrases.

Tier 3: Adjacent subreddits. Communities where the client’s target buyer participates around related topics. These warrant narrow intent-based monitoring for specific phrases that signal decision-making behavior, not broad keyword coverage.

For a B2B SaaS client, a complete three-tier map might include two or three direct category subreddits in tier 1, eight to twelve category subreddits in tier 2, and ten to twenty adjacent buyer communities in tier 3. The keyword configuration for each tier is different, which is why the map has to come first.

Subreddit maps can also document the moderation culture of each subreddit and the posting norms that govern what kind of participation is tolerated. A subreddit that bans any mention of commercial products requires a different monitoring posture than one that welcomes vendor participation.

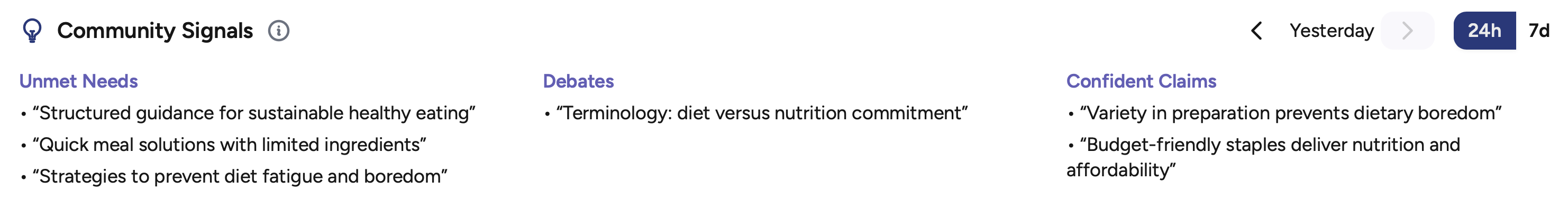

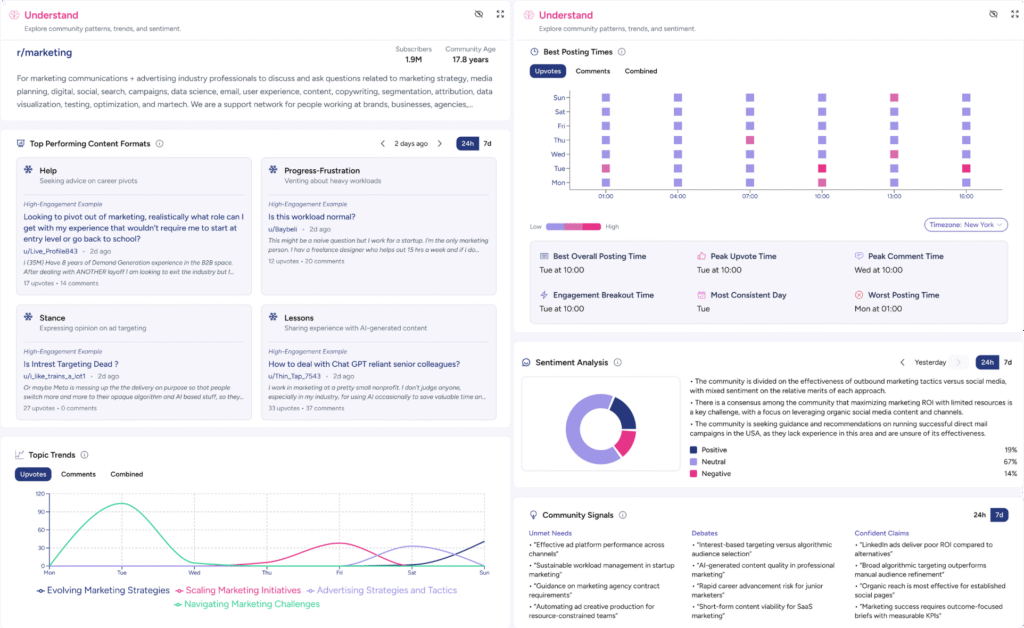

This context shapes how you interpret signals and how you advise clients on response strategy. It belongs in the map, documented up front rather than learned after a post gets removed. A practical way to build it is to run regular sentiment monitoring, look for recurring topic clusters, and note which types of posts are expected in which communities. (We find all of that in the “understand” column of the Karmatic dashboard.)
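One way to keep the three-tier map usable is to store it as structured data per client. The sketch below is a minimal illustration only: the `SubredditEntry` class, its field names, the mode labels, and the subreddit names are all hypothetical placeholders, not part of any monitoring tool's API.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    DIRECT = 1     # monitor all posts and comments
    CATEGORY = 2   # keyword monitoring: brand, competitor, category intent
    ADJACENT = 3   # narrow intent-phrase monitoring only


@dataclass(frozen=True)
class SubredditEntry:
    name: str
    tier: Tier
    allows_vendor_participation: bool
    moderation_notes: str = ""


# Hypothetical map for an illustrative client; names are placeholders.
CLIENT_MAP = [
    SubredditEntry("r/example_brand", Tier.DIRECT, True),
    SubredditEntry("r/example_category", Tier.CATEGORY, False, "no commercial mentions"),
    SubredditEntry("r/example_adjacent", Tier.ADJACENT, False, "self-promotion limited"),
]


def monitoring_mode(entry: SubredditEntry) -> str:
    """Return the monitoring posture the entry's tier calls for."""
    return {
        Tier.DIRECT: "all_posts_and_comments",
        Tier.CATEGORY: "keyword_match",
        Tier.ADJACENT: "intent_phrases_only",
    }[entry.tier]


def tier_counts(entries):
    """Count subreddits per tier, to compare the map against target ranges."""
    counts = {tier: 0 for tier in Tier}
    for entry in entries:
        counts[entry.tier] += 1
    return counts
```

Keeping the moderation notes on the same record as the tier means the analyst reading an alert sees the participation rules alongside the signal.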

Keyword Architecture for Multi-Client Monitoring

At single-client scale, keyword configuration is straightforward: brand name, product name, key competitors, a handful of category phrases. At multi-client scale, keyword architecture becomes a strategic exercise because the configuration directly determines alert volume and signal quality across the entire agency operation.

Every client’s keyword set should be built across three distinct buckets, each with different filtering and routing logic.

Bucket 1: Brand and reputation monitoring. The client’s brand name, product names, executive names, and common misspellings. These alerts require fast routing because they include potential crisis signals alongside routine mentions. They should go directly to the account manager with the highest priority flag.

Bucket 2: Competitive intelligence. Direct competitor names, competitor product names, and phrases that signal competitive evaluation behavior: “[competitor] alternative,” “[competitor] vs,” “switching from [competitor].” These are high value but lower urgency. They inform strategy on a weekly cadence rather than requiring immediate response.

Bucket 3: Intent and opportunity signals. Category-level phrases that indicate a buyer in active research or decision mode. For a project management SaaS client, this might include “best project management tool for,” “we outgrew [category tool],” or “team looking for [capability].” These are the alerts that produce the citation and engagement opportunities that justify the Reddit monitoring program to the client.

Negative keyword exclusions are as important as positive keyword configuration. Every brand name that shares terminology with unrelated common words needs exclusion lists built at setup. Every tier 3 community needs its own exclusion set to filter context that is irrelevant to the client even when the keyword matches. Building exclusions at configuration is faster than triaging false positives after the fact.
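The three-bucket structure with exclusions can be sketched as a small matcher. This is a simplified illustration, assuming plain phrase matching on word boundaries; real tools apply their own matching and relevance scoring. The brand "Relay", the competitor "OtherTool", and the exclusion phrases are made up for the example.

```python
import re
from dataclasses import dataclass, field


def _contains(lowered_text: str, phrase: str) -> bool:
    # Word-boundary match so "relay" does not fire on "relayed".
    pattern = r"\b" + re.escape(phrase.lower()) + r"\b"
    return re.search(pattern, lowered_text) is not None


@dataclass
class KeywordBucket:
    name: str                      # "brand", "competitive", or "intent"
    include: list[str]
    exclude: list[str] = field(default_factory=list)

    def matches(self, text: str) -> bool:
        """True if an include phrase appears and no exclude phrase does."""
        lowered = text.lower()
        if any(_contains(lowered, phrase) for phrase in self.exclude):
            return False
        return any(_contains(lowered, phrase) for phrase in self.include)


# Hypothetical configuration for a made-up brand that shares a common word.
BUCKETS = [
    KeywordBucket("brand", ["relay"], exclude=["relay race", "electrical relay"]),
    KeywordBucket("competitive", ["othertool alternative", "othertool vs",
                                  "switching from othertool"]),
    KeywordBucket("intent", ["best project management tool for", "we outgrew"]),
]


def classify(text: str, buckets=BUCKETS) -> list[str]:
    """Return the names of every bucket the text falls into."""
    return [bucket.name for bucket in buckets if bucket.matches(text)]
```

For example, `classify("We outgrew our spreadsheet, is Relay any good?")` lands in both the brand and intent buckets, while a post about a relay race matches nothing because the exclusion fires first.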

Tool Selection for Agency-Scale Reddit Monitoring

The Reddit monitoring tool landscape in 2026 spans free utilities, purpose-built Reddit monitoring platforms, and enterprise social listening suites. For agencies managing multiple clients, the selection criteria are different from what a single brand evaluates. The features that matter most at agency scale are workspace separation, multi-client dashboard management, AI-powered intent filtering, and delivery routing that can direct different alert types to different team members without manual sorting.

Most entry-level tools fail on workspace separation. F5Bot, the most widely used free Reddit monitoring tool, provides basic email alerts with no filtering, no Slack integration, and no multi-client workspace support. It is appropriate for a single-brand setup tracking three to five keywords and nothing more. Agencies should not be running client programs from F5Bot.

The mid-tier tools that handle multi-client work most effectively offer explicit workspace separation so each client’s monitoring operates in an isolated environment with its own keyword sets, alert feeds, and reporting outputs. Tools like Octolens offer AI relevance scoring that reduces false positives significantly, Slack routing with channel-level customization, and subreddit-level targeting. Syften focuses on signal-to-noise ratio with advanced filtering by source, negative keyword exclusion, and keyword variation support that catches misspellings and abbreviations. Both are priced in a range that makes per-client billing practical.

For agencies that need to integrate Reddit monitoring into broader social listening programs that include Twitter, LinkedIn, and news coverage alongside Reddit, Brand24 provides multi-platform coverage with white-label reporting outputs, though at a significantly higher price point that only makes sense when Reddit is one component of a larger retainer.

The tool selection decision comes down to a practical test: does this tool let each client’s alerts, keywords, and reporting live in a completely isolated workspace, and does it reduce the alert stream to a manageable volume of high-intent signals before anyone on the team sees it? Tools that fail either test create operational drag that compounds with every new client added.

Building the Alert Triage Workflow

Even with the best tool configuration, an agency needs an internal workflow that converts alert detection into decisions and actions. The monitoring tool is the detection layer. The triage workflow is the decision layer. Most agencies that describe their Reddit monitoring as “not working” have a detection layer but no decision layer.

A functional agency triage workflow runs on two cadences: real-time routing for high-priority alerts and a weekly batch review for strategic signals.

Real-time routing applies to brand reputation alerts only. Any mention of the client’s brand name in a thread with significant upvote velocity, any post with clearly negative sentiment in a tier 1 subreddit, and any thread that matches crisis signal patterns should route immediately to the account manager with a response-window expectation attached. Four hours is the practical target for high-intent thread response. Reddit engagement velocity means threads peak and fall within 24 to 48 hours. A response delivered at hour 36 usually enters a thread that has already run most of its engagement cycle.

Weekly batch review applies to competitive intelligence and intent signals. These do not require immediate action, but they require structured review to be useful. The weekly review session for each client should convert the alert batch into three outputs: the top signals worth including in the client report, the threads that warrant a contribution response from the account team, and the patterns that inform the next month’s subreddit participation strategy. Without this structured review session, competitive and intent alerts accumulate unreviewed and lose the context that makes them actionable.
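The two-cadence split can be written down as a routing rule. The sketch below is one possible reading of the workflow, not a tool feature: the `Alert` fields, the `velocity_threshold` default, and the choice to send routine brand mentions to the weekly batch are all assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

RESPONSE_WINDOW = timedelta(hours=4)  # response target named in the text


@dataclass
class Alert:
    client: str
    bucket: str              # "brand", "competitive", or "intent"
    tier: int                # subreddit tier, 1 to 3
    negative: bool           # sentiment flag, assumed to come from the tool
    upvotes_per_hour: float  # assumed proxy for upvote velocity
    crisis_pattern: bool     # assumed flag for crisis-signal matches


def route(alert: Alert, velocity_threshold: float = 25.0) -> str:
    """Return 'realtime' or 'weekly_batch' for a single alert."""
    if alert.bucket != "brand":
        # Competitive and intent signals are reviewed on the weekly cadence.
        return "weekly_batch"
    urgent = (
        alert.upvotes_per_hour >= velocity_threshold
        or (alert.negative and alert.tier == 1)
        or alert.crisis_pattern
    )
    return "realtime" if urgent else "weekly_batch"


def respond_by(detected_at: datetime) -> datetime:
    """Deadline for a real-time alert under the four-hour target."""
    return detected_at + RESPONSE_WINDOW
```

Writing the rule as code, or as an equivalent decision tree on paper, makes it easier to hand triage to a new analyst without changing how alerts are classified.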

The triage workflow should also include a weekly alert quality check: a count of total alerts versus high-intent alerts to monitor whether the keyword and filter configuration is still performing. A healthy ratio targets 10 to 20 high-intent alerts per 30 to 80 total weekly alerts per client. When the ratio degrades, the configuration needs adjustment before the next review cycle.
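The weekly quality check is simple arithmetic, and a short helper keeps it consistent across clients. This is a minimal sketch using the 30 to 80 total and 10 to 20 high-intent ranges above; the function name and issue labels are hypothetical.

```python
def alert_quality(total: int, high_intent: int) -> dict:
    """Compare one client's weekly alert counts against the target ranges."""
    if high_intent > total:
        raise ValueError("high-intent alerts cannot exceed total alerts")
    ratio = high_intent / total if total else 0.0
    issues = []
    if total > 80:
        issues.append("volume_too_high")   # keywords or subreddit set too broad
    elif total < 30:
        issues.append("volume_too_low")    # keywords too narrow or map incomplete
    if high_intent < 10:
        issues.append("few_high_intent")   # filters may be passing mostly noise
    return {"ratio": round(ratio, 3), "issues": issues}
```

A week with 60 total alerts and 15 high-intent alerts returns a ratio of 0.25 and no issues; a week with 235 total alerts is flagged for volume even if the high-intent count looks healthy.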

Structuring Client Reporting From Reddit Monitoring Data

Reddit monitoring data presented as raw alert counts or unfiltered mention feeds is not a client deliverable. CMOs and marketing directors who authorize agency retainers need Reddit monitoring output translated into business-relevant insight. The reporting structure that converts monitoring data into something a CMO reads and acts on has three components.

Signal summary. What the most significant conversations about the client’s brand and category were this week, what the sentiment pattern looked like, and whether anything requires immediate attention. This is the one section every client reads regardless of how much time they have. Keep it to three to five bullet points, each with a direct link to the thread referenced.

Competitive intelligence summary. What the monitoring detected about the client’s main competitors this week, including threads where the client was mentioned favorably in comparison, threads where a competitor was being criticized in ways the client could learn from, and threads where buyers were actively evaluating alternatives. This section informs the client’s broader positioning and is often the most strategically valuable part of the program.

AI visibility section. Which threads from the past week are ranked in Google for relevant queries and actively feeding into AI retrieval systems, which of those threads the client appears in, and which represent citation gaps where a competitor or unclaimed answer is currently occupying the AI response for a query the client should own. This section is what separates a Reddit monitoring report from a standard social listening report. It connects monitoring data to the AI visibility outcomes the client is paying to influence.

The complete weekly report should fit on one page or one screen. Reports that require more than five minutes to read will not be read consistently.
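A locked report format is easier to enforce when the three components are modeled explicitly. The sketch below is illustrative only: the `WeeklyReport` and `Bullet` classes are hypothetical, and the one-to-five bullet check is an assumed way of encoding the length limit on the signal summary.

```python
from dataclasses import dataclass, field

MAX_SIGNAL_BULLETS = 5


@dataclass
class Bullet:
    text: str
    thread_url: str  # direct link to the Reddit thread referenced


@dataclass
class WeeklyReport:
    client: str
    signal_summary: list[Bullet]
    competitive_summary: list[Bullet] = field(default_factory=list)
    ai_visibility: list[Bullet] = field(default_factory=list)

    def validate(self) -> list[str]:
        """Return a list of format problems; an empty list means the report passes."""
        problems = []
        count = len(self.signal_summary)
        if not 1 <= count <= MAX_SIGNAL_BULLETS:
            problems.append(
                f"signal summary needs 1 to {MAX_SIGNAL_BULLETS} bullets, found {count}"
            )
        sections = self.signal_summary + self.competitive_summary + self.ai_visibility
        if any(not bullet.thread_url for bullet in sections):
            problems.append("every bullet needs a direct thread link")
        return problems
```

Running `validate()` before a report goes out catches the two most common drifts from the format: a signal summary that has grown past five bullets and bullets with no thread link.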

KPIs That Actually Measure Reddit Monitoring Program Performance

The weakest version of a Reddit monitoring KPI is mention volume. Mention volume measures whether a brand exists in Reddit conversations. It does not measure whether the monitoring program is producing any value from those conversations.

The KPIs that measure performance at agency scale are organized around four outcomes.

Visibility KPIs. Share of voice in tier 1 and tier 2 subreddits, thread presence on queries that return Reddit content in Google, and citation frequency across AI platforms for priority queries in the client’s category. These are measured monthly rather than weekly because meaningful movement requires accumulation.

Engagement KPIs. Response time on high-intent threads, comment survival rate (the percentage of contributions that remain visible after 24 hours, which is the proxy for subreddit rule compliance and community acceptance), and upvote ratio on agency-contributed comments. A comment that gets removed immediately or consistently earns downvotes is a program quality problem, not a metric to report favorably.

Competitive intelligence yield. The number of competitive alerts per week that were included in client reporting, the number of competitive patterns identified monthly, and whether competitive Reddit signals informed any content, positioning, or product decisions. Intelligence that is detected but never acted on is not intelligence yield.

AI citation growth. The number of queries for which the client appears in an AI citation, the trend direction month over month, and the citation gap count for priority queries. This metric requires running a structured citation audit at program launch and repeating it monthly. It is the KPI that connects Reddit monitoring spend to the AI visibility outcomes that most clients consider the strategic rationale for the program.
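Two of these KPIs reduce to simple calculations once the underlying data is recorded. The sketch below assumes the agency logs, for each contributed comment, whether it was still visible after 24 hours, and records the monthly citation audit as a mapping from query to the brands cited. Both data shapes, the brand names, and the function names are hypothetical.

```python
def comment_survival_rate(visible_after_24h: list[bool]) -> float:
    """Share of contributed comments still visible 24 hours after posting."""
    if not visible_after_24h:
        return 0.0
    return sum(visible_after_24h) / len(visible_after_24h)


def citation_gaps(audit: dict[str, set[str]], client: str) -> list[str]:
    """Priority queries where the client is absent from the AI citations.

    `audit` maps each priority query to the set of brands cited for it;
    an empty set means nobody has claimed the answer yet.
    """
    return sorted(query for query, cited in audit.items() if client not in cited)


# Hypothetical audit snapshot for illustration.
AUDIT = {
    "best project management tool for agencies": {"clientco", "othertool"},
    "project management tool with time tracking": {"othertool"},
    "simple kanban tool for small teams": set(),
}
```

With this snapshot, `citation_gaps(AUDIT, "clientco")` returns the two queries where the client is missing, and the length of that list is the citation gap count to track month over month.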

Scaling From Five Clients to Twenty

The operational difference between managing Reddit monitoring for five clients and twenty is not the tools. The same tool stack that works at five clients can support twenty with the right configuration. The difference is process documentation and staffing model.

At five clients, a single experienced analyst can carry the subreddit mapping, keyword configuration, triage workflow, and weekly reporting for the full roster, spending roughly two to three hours per client per week. At twenty clients, that same workflow requires either a team structure where junior analysts handle triage and senior analysts handle strategic synthesis and reporting, or a significantly more automated pipeline where tool configuration does more of the signal filtering work and the human layer is reserved for interpretation and client communication.
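The staffing pressure is visible in the arithmetic. The helper below is a small worked example using the two to three hours per client per week quoted above; the function name is hypothetical, and it assumes per-client effort stays constant as the roster grows.

```python
def weekly_analyst_hours(clients: int, hours_low: float = 2.0,
                         hours_high: float = 3.0) -> tuple[float, float]:
    """Return the (low, high) range of analyst hours per week for a roster."""
    return clients * hours_low, clients * hours_high


five = weekly_analyst_hours(5)     # (10.0, 15.0)
twenty = weekly_analyst_hours(20)  # (40.0, 60.0)
```

Five clients cost 10 to 15 hours a week, which one analyst can absorb alongside other work. Twenty clients at the same per-client effort cost 40 to 60 hours, which is why the workload has to be split across a team or reduced through more automated filtering.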

The agencies that scale Reddit monitoring programs successfully document every component of the workflow as a repeatable process before they need to hand it off. Subreddit mapping uses a standard template. Keyword architecture follows the three-bucket structure. Alert triage runs on a written decision tree. Client reports use a locked format. When a new analyst joins the team, they should be able to run a client’s Reddit monitoring program from documentation without relying on institutional knowledge held by a single person.

Client onboarding for new Reddit monitoring programs follows the same sequence regardless of client size: subreddit mapping, keyword architecture, tool workspace configuration, triage workflow setup, baseline citation audit, and first report. Skipping steps or running them in parallel produces configuration debt that creates problems at the three-month mark, when the program has accumulated enough alert history to expose setup errors.

For agencies building Reddit monitoring as a service line rather than a component of an existing retainer, monitoring alone produces intelligence value but no citation growth. Contribution strategy without monitoring has no signal base to guide where and when to contribute. The programs that produce measurable AI visibility outcomes for clients combine both, with monitoring providing the signal layer and contribution strategy acting on that signal.

If you want to see the monitoring side in action, Karmatic’s dashboard is available now with a free tier and trial. It’s built for exactly this use case: multi-client Reddit monitoring with workspace separation, intent filtering, and reporting outputs that translate to the three-component format above.

Frequently Asked Questions

How many subreddits should an agency monitor per client?

The practical range for a mid-market B2B client is 20 to 35 subreddits across the three-tier mapping structure: two to three direct category subreddits, eight to twelve category communities, and ten to twenty adjacent buyer communities. Monitoring more subreddits than this without corresponding tier-specific filtering produces alert volumes that become unmanageable. The number should be driven by where the client’s target buyer actually spends time on Reddit, which the subreddit mapping process establishes, not by what the tool’s default configuration suggests.

What is a healthy alert volume for a Reddit monitoring program?

A well-configured monitoring setup for a single B2B client should produce 30 to 80 total weekly alerts, of which 10 to 20 are classified as high-intent. This ratio assumes proper subreddit targeting, negative keyword exclusions, and AI relevance filtering are all in place. Programs producing significantly more than 80 weekly alerts per client typically have keyword configuration that is too broad, missing exclusion lists, or subreddit targeting that includes too many tier 3 communities without intent-specific keyword filters. Programs producing fewer than 30 weekly alerts may have keyword sets that are too narrow or subreddit maps that are missing relevant communities.

How should agencies separate client monitoring data to prevent cross-contamination?

Each client’s monitoring program should operate in an isolated workspace with its own keyword configuration, subreddit targeting list, alert feed, and reporting output. The most common cross-contamination failure is routing all clients’ alerts to a single shared Slack channel, which requires manual sorting before any alert can be acted on. Tools that offer explicit multi-client workspace separation are a requirement for agency-scale programs. Within each workspace, alert routing should also separate by priority tier: brand reputation alerts route to the account manager, competitive intelligence alerts route to the strategy team, and intent signals route to the contribution planning workflow.

How long does it take to build a complete Reddit monitoring setup for a new client?

A complete setup following the full sequence takes approximately five to seven hours spread across the first week: two to three hours for subreddit mapping and documentation, one to two hours for keyword architecture and exclusion list building, one hour for tool workspace configuration and testing, and one hour for establishing the triage workflow and reporting template. Agencies that skip subreddit mapping and move directly to keyword configuration save two to three hours at setup and spend significantly more time managing false positives for the duration of the engagement. The setup investment compounds positively over the life of the client relationship.

How does Reddit monitoring connect to AI search visibility?

Reddit threads rank in Google for an enormous range of queries, and those same threads feed the AI retrieval systems that power ChatGPT, Perplexity, and Google AI Overviews. Reddit accounts for more than 40% of Perplexity’s top-cited sources and more than 20% of Google AI Overview citations. A Reddit monitoring program that tracks which threads are actively cited by AI engines, and where a client has citation gaps against competitors, connects brand monitoring directly to AI visibility outcomes. That’s a different program than social listening, and it requires different KPIs to demonstrate the value.

How should agencies handle Reddit monitoring for clients in sensitive categories like health, finance, or legal?

Sensitive category clients require two additional layers in the monitoring workflow. First, compliance review: any contribution drafted in response to a monitoring signal needs review against the client’s regulatory requirements before posting, which adds time to the response workflow and must be built into the four-hour response-window target as a realistic constraint. Second, escalation protocol: threads involving serious negative brand sentiment, potential misinformation, or crisis-adjacent content need a documented escalation path to the client’s legal or communications team, not just the agency account manager. Build both layers into the program at onboarding.

Can Reddit monitoring justify its cost as a standalone agency service?

Reddit monitoring as a pure intelligence service, with no contribution activity, is difficult to price as a standalone retainer because the deliverable is insight rather than action, and clients consistently undervalue insight they are not seeing translated into outcomes. The programs that sustain as standalone services are those that connect monitoring data directly to measurable outcomes: competitive intelligence that informed a positioning decision, an AI citation gap that was closed, a crisis signal that was caught early. Bundling monitoring with contribution strategy and AI visibility reporting creates a package where the monitoring feeds the actions and the actions produce the outcomes. That is a significantly more defensible service line.

What should a Reddit monitoring report include for client deliverables?

A client-ready Reddit monitoring report has three components: a signal summary (the most significant brand and category conversations of the week, no more than five bullet points with direct thread links), a competitive intelligence summary (what the monitoring detected about competitors, including favorable comparisons and active evaluation threads), and an AI visibility section (which monitored threads are ranking in Google and being cited by AI engines, and where citation gaps exist). The complete report should fit on one page. Reports that require more than five minutes to read will not be read consistently.